TCP SYN Scanning and the Problem of AI Hallucinations in Cybersecurity

October 31, 2023

I’ve been following the development of large language models (LLMs) for several years and wrote my first technical memo on them a little more than two years ago. LLMs have been my full-time job for some time now. I like to think I’ve made it past the stages of inflated expectations and disillusionment to see this technology for what it is.

In the context of LLMs, a “hallucination” is a response from an LLM to a prompt that seems coherent at first, but turns out to be nonsensical or factually incorrect on closer examination. For example, if you ask, “Who is the CEO of ExtraHop,” several LLMs will tell you “Arif Kareem is the CEO of ExtraHop.” That statement was true from 2016 to 2022, but now it’s incorrect. (Greg Clark is the current CEO.) Why does this happen? In this case, the hallucination is caused by outdated training data. At the time these models were trained, the statement was accurate; today it’s not.

Some of you may be thinking that information that’s a little out of date or slightly inaccurate isn’t that big of a deal. But 40% of respondents to a recent ExtraHop survey disagree, saying that receiving inaccurate or nonsensical responses was their greatest concern about generative AI tools. And they’re right to be concerned; hallucinations can cause all kinds of problems. LLMs can be powerful tools for cybersecurity professionals, but they can lead you seriously astray if you’re not careful. In this post, I’ll give an example of how an LLM can seem extremely helpful yet end up sending you down the wrong path.

Building a TCP SYN Scan Detection With an LLM

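TCP SYN scanning is a tried-and-true method attackers use to search a network for open ports. Conceptually it’s very simple: the scanner sends a SYN packet to a port and infers the port’s state from the reply, without ever completing the TCP handshake. Yet it’s hard for cybersecurity professionals to detect reliably, because RST packets, one of the telltale replies to a scan, are very common on ordinary networks.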

Let’s assume we know a little bit about TCP SYN scans, but we need help implementing a piece of software that can detect them, so we ask an LLM for some help.

This is a pretty good answer, but it leaves out some key information. Do you know what’s missing? One of the possible responses to a SYN is “nothing.” There may not be a network device at the address being scanned. That omission is critical when you’re implementing a detector. Let’s continue down the path toward building a TCP SYN scan detection.
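The possible outcomes of a single probe, including silence, can be summarized in a short Python sketch. Only the flag bit values come from the TCP specification (RFC 9293); the function name `classify_syn_reply` and the outcome labels are hypothetical, chosen for illustration.

```python
from typing import Optional, Tuple

# TCP header flag bits as defined in RFC 9293.
SYN, RST, ACK = 0x02, 0x04, 0x10


def classify_syn_reply(reply_flags: Optional[int]) -> Tuple[str, Optional[int]]:
    """Classify the reply to a single SYN probe (hypothetical helper).

    reply_flags is the TCP flags byte of the reply, or None if nothing came
    back before the timeout. Returns (outcome, flags the scanner sends next).
    """
    if reply_flags is None:
        # No reply at all: no device at the address, a firewall dropping
        # the probe, or a lost packet. A detector has to handle this case.
        return ("no_response", None)
    if reply_flags & RST:
        # The host exists, but nothing is listening on that port.
        return ("closed", None)
    if reply_flags & SYN and reply_flags & ACK:
        # Port is open. A SYN scanner sends RST instead of the final ACK,
        # so the handshake is never completed ("half-open").
        return ("open", RST)
    return ("unexpected", None)
```

A closed port usually answers with RST+ACK, which is why the RST check runs before the SYN-ACK check.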

This response looks too good to be true. It flags possible scans, but experience tells me that the approach the LLM suggested will return many false positives. So let’s tell the model we want something more effective.
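To illustrate how a simple heuristic produces false positives, here is a hypothetical detector that only counts how many address:port targets each source contacts. It is not the code from the LLM’s response; the function name `naive_scan_alerts` and the threshold of 20 are assumptions.

```python
from collections import defaultdict


def naive_scan_alerts(connections, threshold=20):
    """Flag every source that contacts more than `threshold` distinct
    address:port targets, regardless of how those connections ended.

    connections: iterable of (src_ip, dst_ip, dst_port) tuples.
    """
    targets_per_source = defaultdict(set)
    for src_ip, dst_ip, dst_port in connections:
        targets_per_source[src_ip].add((dst_ip, dst_port))
    # Nothing here distinguishes a scanner from a busy but benign host.
    return {src for src, targets in targets_per_source.items()
            if len(targets) > threshold}


# A monitoring server that successfully polls 25 web servers.
monitor = [("10.0.0.5", "10.0.1.%d" % i, 443) for i in range(25)]
print(naive_scan_alerts(monitor))
```

The monitoring server is flagged exactly as a scanner would be, because the count ignores whether each connection completed, was refused, or went unanswered.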

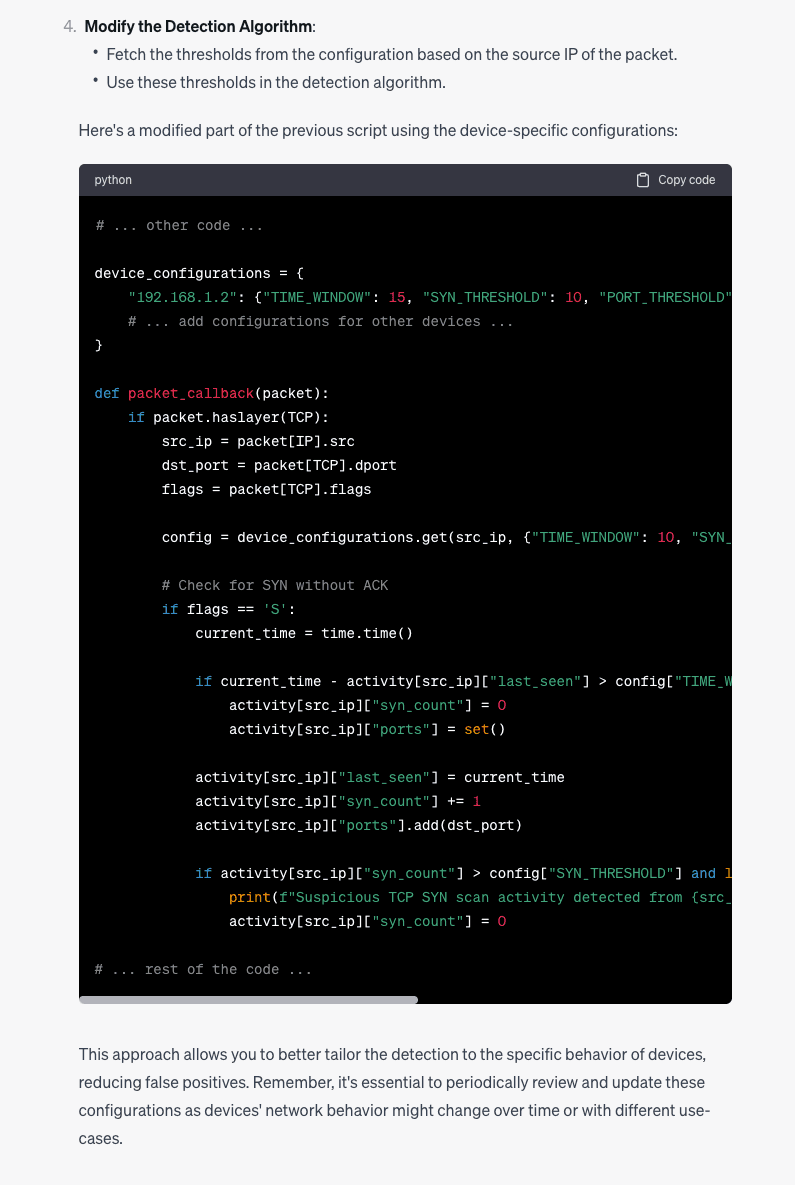

This response is much better than the first. However, it assumes that all network devices behave similarly, so in practice it will only give good results if you tune the code to your specific network.
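One common way to tune a detector is to derive its threshold from a baseline of your own network’s normal traffic rather than hard-coding it. The sketch below assumes you already have historical per-host counts (for example, distinct targets contacted per host per hour); `baseline_threshold`, `k`, and `floor` are hypothetical names and defaults, and mean plus k standard deviations is just one simple rule among many.

```python
import statistics


def baseline_threshold(historical_counts, k=3.0, floor=10):
    """Derive a per-network alert threshold from historical per-host counts.

    Uses mean + k population standard deviations, never going below `floor`
    so that a very quiet baseline does not produce a hair-trigger threshold.
    """
    mean = statistics.mean(historical_counts)
    spread = statistics.pstdev(historical_counts)
    return max(floor, mean + k * spread)


print(baseline_threshold([10, 20, 30]))  # roughly 44.5
```

This only adapts a single number to the network; it does not address how individual devices respond to probes.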

The LLM hasn’t explained how to tune this code properly. In my experience, what we need is something that examines every address:port pair with packets traveling between them, even when one of the addresses has no device attached.
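The pair-based approach just described can be sketched in Python: record every SYN keyed by its (source address:port, destination address:port) pair, classify what happened next on that pair, including silence, and then score each source by how many of its pairs never became normal connections. This is a hypothetical illustration, not ExtraHop’s implementation; the function names, outcome labels, packet tuple layout, and 3-second reply timeout are all assumptions.

```python
from collections import defaultdict

# TCP header flag bits (RFC 9293).
SYN, RST, ACK = 0x02, 0x04, 0x10


def pair_outcomes(packets, timeout=3.0):
    """Summarize what happened on each address:port pair that saw a SYN.

    packets: time-ordered (ts, src_ip, src_port, dst_ip, dst_port, flags).
    Returns {(src_ip, src_port, dst_ip, dst_port): outcome}.
    """
    pending = {}   # pair -> timestamp of its initial SYN
    outcomes = {}
    for ts, sip, sport, dip, dport, flags in packets:
        fwd = (sip, sport, dip, dport)
        rev = (dip, dport, sip, sport)
        if flags & SYN and not flags & ACK:
            # New connection attempt; assume silence until a reply shows up.
            pending[fwd] = ts
            outcomes[fwd] = "no_response"
        elif rev in pending:
            # First packet from the target back to the initiator.
            syn_ts = pending.pop(rev)
            if ts - syn_ts > timeout:
                continue  # too late to count as a reply
            if flags & RST:
                outcomes[rev] = "refused"
            elif flags & SYN and flags & ACK:
                outcomes[rev] = "syn_ack"
        elif outcomes.get(fwd) == "syn_ack":
            # Initiator's follow-up: ACK completes the handshake, RST aborts it.
            outcomes[fwd] = "half_open" if flags & RST else "established"
    return outcomes


def suspicious_fraction(outcomes):
    """Per source IP, the fraction of pairs that never became a normal
    connection. Pairs stuck at "syn_ack" are treated as ambiguous."""
    totals = defaultdict(int)
    failed = defaultdict(int)
    for (sip, _sport, _dip, _dport), outcome in outcomes.items():
        totals[sip] += 1
        if outcome in ("refused", "half_open", "no_response"):
            failed[sip] += 1
    return {sip: failed[sip] / totals[sip] for sip in totals}
```

In this sketch a scanner probing one open, one closed, and one unused port scores 1.0, while an ordinary client completing a handshake scores 0.0. The score is still a signal rather than a verdict, so it needs the same network-specific context discussed throughout this post.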

Let's try to dig a little deeper.

It seems the model knows what we want, but it’s now giving us code fragments. This is not terrible advice, but it is an incomplete solution.

Tips for Avoiding Hallucination Pitfalls

This effort to use an LLM to build a detector for TCP SYN scanning provides a great example of hallucinations building on one another. The compounding effect is subtle but has driven us down a path that is highly unlikely to succeed.

In the case of TCP SYN scanning, we’re looking at a difficult problem that requires specialized knowledge:

- There are many corner cases that can look like a scan.

- There’s no deterministic way to detect a TCP SYN scan; you can only get probabilities.

- Behavior on a network that resembles a scan is common, so it’s important to understand how to classify normal and abnormal behaviors.

- Does the network we want to monitor have security or configuration software that periodically scans the network? After all, not every TCP SYN scan is performed by bad guys.

- Many more things …
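Two items in that list, probabilistic output and authorized scanners, translate fairly directly into code. The following Python sketch returns a likelihood rather than a yes/no verdict and skips hosts on an allowlist. Everything here is hypothetical: the `AUTHORIZED_SCANNERS` set, the two features (which could come from pair-level tracking of the kind described earlier in this post), and especially the weights and bias, which are placeholders rather than tuned values.

```python
import math

# Hypothetical allowlist: hosts known to run authorized scans
# (vulnerability scanners, asset-discovery tools, and so on).
AUTHORIZED_SCANNERS = {"10.0.0.50"}


def scan_likelihood(src_ip, failed_fraction, distinct_targets,
                    weights=(6.0, 0.05), bias=-5.0):
    """Map a few behavioral features to a 0-1 score with a logistic function.

    The weights and bias are placeholders; in practice they would have to be
    fit or tuned against labeled traffic from the monitored network.
    Returns None for allowlisted sources so they can be reviewed separately.
    """
    if src_ip in AUTHORIZED_SCANNERS:
        return None
    w_failed, w_targets = weights
    z = bias + w_failed * failed_fraction + w_targets * distinct_targets
    return 1.0 / (1.0 + math.exp(-z))


print(scan_likelihood("10.0.0.9", 1.0, 100))  # close to 1
print(scan_likelihood("10.0.0.7", 0.0, 3))    # close to 0
```

Returning a score keeps the final decision with an analyst or a downstream threshold, which fits the point that detection here is a matter of probabilities.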

To avoid falling into this trap I have a few rules I follow when using LLMs:

- If the information I ask for doesn’t have to be true, then I stop worrying about it. For example, I might not like all the potential baby names an LLM suggests, but they aren’t “false.” If the information must be correct, I need to pay close attention and test everything it tells me.

- The more specialized information you’re seeking, the more likely you are to get a hallucination in the response. If you ask an LLM to write “Hello World” in Python, it almost never fails. But as we saw above, it will probably struggle to build an effective TCP SYN scan detector.

Given the shortcomings of LLMs in the use case I’ve described, why would anyone use an LLM in a cybersecurity application? There are a few reasons:

- The interaction shown in this post is an example of how someone who knows very little about TCP SYN scanning can come up to speed quickly. They won’t be an expert, but they will know enough to have a good idea what is happening if they see a TCP SYN scan detection on their ExtraHop sensor.

- Because we can make specialized versions of these models, we can make investigating security events much easier and reduce the need for network-specific jargon. In the interaction above, a good deal of knowledge is required to make progress toward the goal. By augmenting or enhancing the model, we can skip a number of the intermediate prompts and get directly to the information we need.

Ultimately, I think the outlook for LLMs and current cybersecurity methods working as a team is quite good, but we need to be deliberate in our approaches and take the time to understand how these tools can lead us astray.

Have you experimented with using LLMs for cybersecurity? Share your experience using LLMs in the ExtraHop Customer Community. Join the conversation here.

Director of Data Science

A highly motivated and accomplished technologist with over 20 years' hands-on experience designing, managing, planning, and implementing state-of-the-art software and electronic systems. I have a passion for solving hard problems that produce value for everyone.