The Big Idea Behind IT Operations Analytics (ITOA): IT Big Data

April 11, 2016

What is IT Operations Analytics?

IT Operations Analytics (ITOA) is an approach that allows you to eradicate traditional data silos using Big Data principles. By analyzing all your data from multiple sources, including log, agent, and wire data, you'll be able to support proactive, data-driven operations with clear, contextualized operational intelligence.

This is the first post in a two-part series explaining how you can build an ITOA practice, including:

- How ITOA is data-driven and borrows from Big Data principles

- A taxonomy you can use to define ITOA data sets

- Purposes and outcomes for building an ITOA practice

- How to apply these principles in real-world workflows

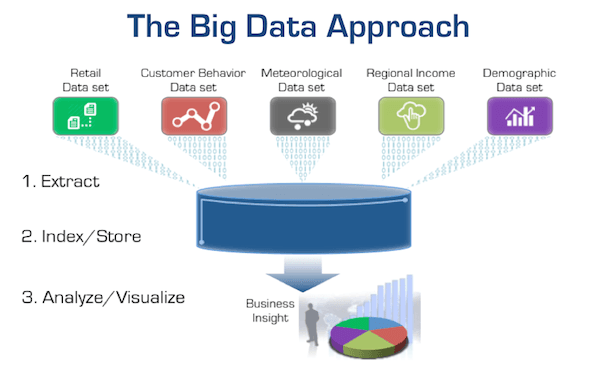

ITOA Uses Big Data Principles

To maximize flexibility and avoid vendor lock-in, more organizations are adopting open-source technologies such as Elasticsearch, MongoDB, Spark, Cassandra, and Hadoop as their common data store. This same approach is at the heart of ITOA.

A Big Data practice provides the flexibility to combine and correlate different data sets and their sources to derive unexpected and new insights. The same objective applies to IT Operations Analytics.
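To make the idea of combining and correlating data sets concrete, here is a minimal Python sketch. It assumes three hypothetical in-memory record lists standing in for log, agent, and wire data; the field names (`host`, `ts`, and so on) and the one-minute bucketing are illustrative assumptions, not part of any particular product or data store.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical sample records; every field name and value is illustrative.
log_events = [
    {"host": "web-01", "ts": "2016-04-11T10:00:05", "message": "HTTP 500 on /checkout"},
    {"host": "db-01", "ts": "2016-04-11T10:01:30", "message": "slow query"},
]
agent_metrics = [
    {"host": "web-01", "ts": "2016-04-11T10:00:20", "cpu_pct": 97},
]
wire_records = [
    {"host": "web-01", "ts": "2016-04-11T10:00:40", "tcp_retransmits": 42},
]


def minute_bucket(ts):
    """Truncate an ISO 8601 timestamp to the start of its minute."""
    return datetime.fromisoformat(ts).replace(second=0, microsecond=0)


def correlate(**sources):
    """Group records from every named source by (host, minute)."""
    merged = defaultdict(dict)
    for name, records in sources.items():
        for rec in records:
            key = (rec["host"], minute_bucket(rec["ts"]))
            merged[key].setdefault(name, []).append(rec)
    return merged


combined = correlate(log=log_events, agent=agent_metrics, wire=wire_records)

# Keep only the host/minute pairs that all three sources observed.
full_context = {key: views for key, views in combined.items() if len(views) == 3}

for (host, minute), views in full_context.items():
    print(host, minute.isoformat(), sorted(views))
```

In this sketch, only `web-01` during the 10:00 minute appears in all three sources, so the error in the log can be read alongside the CPU and network observations for the same host and time. A real deployment would run this kind of join inside the common data store rather than in application memory.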

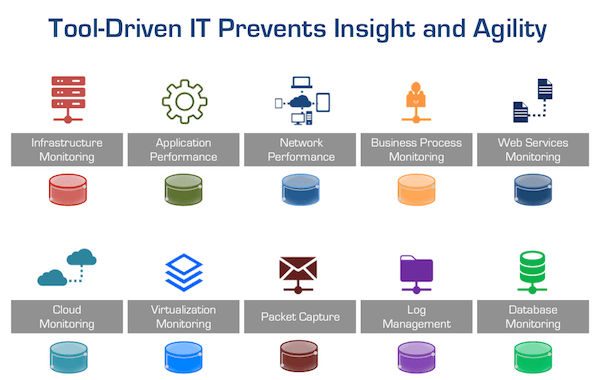

The Shift from Tools-Driven to Data-Driven IT

If the CIO wants their organization to be data-driven with the ability to provide better performance, availability, and security analysis while making more informed investment decisions, they must design a data-driven monitoring practice. This requires a shift in thinking from today's tool-centric approach to a data-driven model similar to a Big Data initiative.

Tool-centric approaches create data silos, tool bloat, and frequent cross-team dysfunction.

If the CISO wants better security insight, monitoring, and surveillance, they must think in terms of continuous, pervasive monitoring and correlated data sources, not in terms of analyzing the separate data silos of log management, anomaly detection, packet capture, or malware monitoring systems.

If the VP of Application Development wants better cross-team collaboration and faster, more reliable, and more predictable application upgrades and rollouts for both on-premises and cloud-based workloads, they must have a continuous, data-driven monitoring architecture and practice. The ITOA monitoring architecture must span the entire application delivery chain, not just the application stack. Because workloads are so interdependent, an organization without this data will be flying blind, resulting in project delays, capacity issues, cross-team dysfunction, and increased costs.

If the VP of Operations wants to cut their mean time to resolution (MTTR) in half, dramatically reduce downtime, and improve end-user experience while instituting a continuous improvement effort, they must be able to unify their operational data sets and analyze across them.
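As a small worked example of the MTTR goal, the sketch below computes mean time to resolution from a handful of incident records and derives the "cut in half" target. The incident timestamps and the field names `detected` and `resolved` are hypothetical, chosen only to illustrate the arithmetic.

```python
from datetime import datetime

# Hypothetical incident records; timestamps are invented for illustration.
incidents = [
    {"detected": "2016-04-01T09:00:00", "resolved": "2016-04-01T11:00:00"},  # 2 hours
    {"detected": "2016-04-05T14:00:00", "resolved": "2016-04-05T15:00:00"},  # 1 hour
    {"detected": "2016-04-09T08:00:00", "resolved": "2016-04-09T11:00:00"},  # 3 hours
]


def mttr_hours(records):
    """Return the mean time from detection to resolution, in hours."""
    if not records:
        raise ValueError("at least one incident is required")
    total_seconds = sum(
        (
            datetime.fromisoformat(rec["resolved"])
            - datetime.fromisoformat(rec["detected"])
        ).total_seconds()
        for rec in records
    )
    return total_seconds / len(records) / 3600


baseline = mttr_hours(incidents)  # (2 + 1 + 3) / 3 hours
target = baseline / 2             # the "cut MTTR in half" goal

print(f"Baseline MTTR: {baseline:.1f} h, target: {target:.1f} h")
```

Tracking this number over time is one simple way to check whether a continuous improvement effort is actually moving resolution times toward the target.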

The CIO and the security, application, network, and operations teams can all achieve these objectives by drawing from the exact same data sets that are the foundation of ITOA. This effort should not be difficult or costly, and it should not take years to implement. In fact, this data-driven approach to IT is already being practiced today; we're simply codifying the design principles and practices we've observed and learned from our own customers, who are the inspiration behind it.

Up Next: The Data Sets Used for ITOA

[1] Gartner: Apply IT Operations Analytics to Broader Datasets for Greater Business Insight, June 2014

Senior Vice President of Marketing and Business Development

Erik Giesa is the Senior Vice President of Marketing and Business Development at ExtraHop Networks. Prior to joining ExtraHop, Erik was Senior Vice President of Product Management and Product Marketing at F5 Networks where he defined product, marketing, and solution strategy for all F5 products.