What Is a Tinygram?

September 21, 2015

And what should you do about it?

If you're examining your network with an eye for improving performance, you're going to run into tinygrams eventually. I get questions about this all the time: "What are tinygrams? When do they arise? Are they a problem? What can I do about them?"

I'll take these one at a time.

What Is a Tinygram?

"Tinygram" is just another way to say "small packet." In general, small packets should be avoided where possible because they use the network inefficiently: every packet carries the same fixed headers no matter how little payload rides along, so a tinygram has a poor ratio of header data to useful information. A segment with a single byte of payload, for example, still carries at least 40 bytes of IPv4 and TCP headers. Every network is subject to congestion, TCP/IP overhead, and latency. Network admins strive to mitigate these conditions, and tinygrams are often part of the problem.

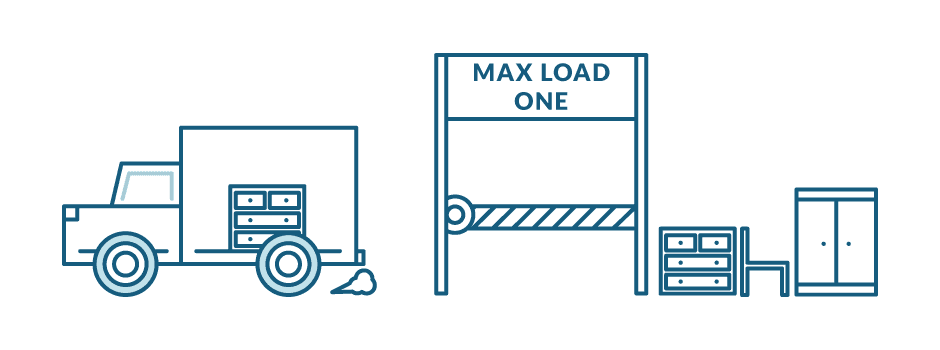

Consider a moving company that's helping clear out your old house. Imagine they've got a line of trucks waiting to haul furniture. Inside of each truck the movers only place a single piece of furniture, then send the truck off to deliver it to your swanky new pad. Obviously, for any given road along the path this could cause congestion - a bunch of trucks are all sent out to deliver furniture, with a payload size of one table or chair. Tinygrams are similar: they're TCP segments that could hold more data, but don't.

Clearly it would be more efficient for the movers to completely fill each truck and only then ship it off to the destination. This gives you more 'bandwidth' efficiency - more furniture (payload) is being moved per truck (packet) per round trip between the old place and the new one (latency).
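To put rough numbers on the truck analogy, here is a minimal sketch that computes how much of each frame is actual payload. The header sizes are assumptions (IPv4 and TCP without options, untagged Ethernet), and the sketch ignores Ethernet minimum-frame padding, the preamble, and the inter-frame gap.

```python
# Assumed per-packet overhead in bytes (no IP/TCP options, untagged Ethernet).
ETHERNET_OVERHEAD = 18  # 14-byte header + 4-byte frame check sequence
IPV4_HEADER = 20
TCP_HEADER = 20


def payload_efficiency(payload_bytes: int) -> float:
    """Return the fraction of the frame that is useful payload."""
    total = payload_bytes + ETHERNET_OVERHEAD + IPV4_HEADER + TCP_HEADER
    return payload_bytes / total


# Compare a one-byte tinygram with progressively fuller "trucks".
for size in (1, 64, 512, 1460):
    print(f"{size:>5}-byte payload: {payload_efficiency(size):.1%} of the frame is payload")
```

Under these assumptions a one-byte payload makes up under 2% of the frame, while a full 1,460-byte payload makes up about 96%.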

When Do Tinygrams Arise?

The plot thickens in the application-delivery world, though. In almost any environment you've got core services as well as adjuncts like intermediate proxies, load balancers, and firewalls that can all affect the delivery of traffic. These services may each have different Maximum Transmission Units (MTUs) or other settings that limit the amount of data that can be sent across the network.

For example, if any part of your system has a restricted MTU that is too small, it can result in larger numbers of packets being transmitted, with disadvantageous ratios of frame header data to actual payload. It's like there's a city regulation that says you're only allowed to put one piece of furniture in any vehicle, even a huge moving truck.
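As a rough illustration of the MTU effect, the sketch below counts how many TCP segments a transfer needs at two different MTUs. The 40-byte header figure (IPv4 plus TCP, no options) and the example MTU values are assumptions chosen for illustration, not measurements from any particular network.

```python
import math

HEADERS = 40  # assumed: 20-byte IPv4 header + 20-byte TCP header, no options


def segments_needed(data_bytes: int, mtu: int) -> int:
    """Number of TCP segments needed to carry data_bytes at a given MTU."""
    mss = mtu - HEADERS  # largest payload that fits in one packet
    return math.ceil(data_bytes / mss)


def header_overhead(data_bytes: int, mtu: int) -> int:
    """Total IPv4 + TCP header bytes spent carrying data_bytes."""
    return segments_needed(data_bytes, mtu) * HEADERS


transfer = 1_000_000  # bytes to move
for mtu in (1500, 576):
    print(f"MTU {mtu}: {segments_needed(transfer, mtu)} segments, "
          f"{header_overhead(transfer, mtu)} header bytes")
```

With these assumptions, moving 1,000,000 bytes takes 685 segments at an MTU of 1500 and 1,866 segments at an MTU of 576, so the smaller MTU spends almost three times as many bytes on headers.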

Most TCP stacks enable Nagle's algorithm by default to minimize network congestion due to tinygrams. The algorithm saves bandwidth by holding back small writes while earlier data is still unacknowledged, coalescing them into as few TCP segments as possible before they are sent. It tells the moving crew they can't drive away with a half-empty truck while the previous truck is still out on the road.
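The core decision rule can be sketched in a few lines. This is a deliberately simplified model, not a real TCP stack: the `NagleSender` class and its fields are hypothetical names, the MSS value is assumed, one ACK is treated as acknowledging everything in flight, and window limits and timers are ignored.

```python
from dataclasses import dataclass, field

MSS = 1460  # assumed maximum segment size in bytes


@dataclass
class NagleSender:
    """Toy model of Nagle's rule for a single connection."""
    buffered: int = 0       # bytes written by the app but not yet sent
    unacked: bool = False   # True while sent data is awaiting an ACK
    sent: list = field(default_factory=list)  # sizes of segments put on the wire

    def _flush(self) -> None:
        # Full-sized segments may always go out.
        while self.buffered >= MSS:
            self.sent.append(MSS)
            self.buffered -= MSS
            self.unacked = True
        # A small segment goes out only when nothing is in flight.
        if self.buffered and not self.unacked:
            self.sent.append(self.buffered)
            self.buffered = 0
            self.unacked = True

    def write(self, nbytes: int) -> None:
        """The application hands nbytes to the connection."""
        self.buffered += nbytes
        self._flush()

    def ack(self) -> None:
        """The peer acknowledges all outstanding data (a simplification)."""
        self.unacked = False
        self._flush()


sender = NagleSender()
for _ in range(5):
    sender.write(10)   # five small application writes in quick succession
sender.ack()           # the ACK for the first segment arrives
print(sender.sent)
```

In this model the five 10-byte writes go out as two segments, `[10, 40]`, instead of five tinygrams: the first write is sent immediately, and the other four wait for the ACK and leave together. That wait is also where the added latency comes from.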

In this analogy, limiting the MTU size sounds ridiculous, but sometimes you need smaller packet sizes or restricted MTUs. That depends on the unique variables in your environment, which brings us to the next question:

Are Tinygrams a Problem?

To know whether tinygrams are a problem in your application delivery environment, it's important to understand a few things:

- The types of traffic and the patterns of that traffic in your environment.

- Who the consumers of these application streams are, whether humans, machines, or other applications.

- The business requirements around the delivery of any specific application or service.

A single server could be responsible for several different types of traffic: interactive sessions, bulk data loads, or anything in between. Each of these could warrant special treatment on the wire. It turns out that tinygrams may well be common, expected, or even required, depending on the type of application we're dealing with.

Let's look at an example of a situation where allowing tinygrams on your network is actually vital to creating a good user experience. Imagine a user logged into a virtual desktop infrastructure (VDI). The screen looks normal to them, but all the computing is happening in a data center far away, maybe in a different state. What happens when they move their mouse?

- The user's computer sends a series of coordinates corresponding to their cursor movement to the virtual desktop server, wherever it is hosted.

- The Virtual Desktop server processes the coordinates, performs the necessary computations, and sends signals back to the user's machine telling it where to display the cursor.

- The user's cursor moves in the way they expect it to, based on how they moved their physical mouse.

In this example, the amount of data that needs to be sent is tiny, but it needs to get there fast, otherwise the user's cursor movements will be slow or jumpy. That's a bad user experience, and people hate it. You have to allow the tinygrams containing the cursor coordinates through to keep users happy.

This presents IT teams with a difficult situation. Conserving bandwidth is usually a priority in network infrastructures, but there are times when doing so will be disastrous for user experience. You have to choose between speed and efficiency.
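At the application level, that choice is often made per connection. One common mechanism is the standard `TCP_NODELAY` socket option, which disables Nagle's algorithm on a single socket so small writes are sent without waiting. The sketch below only creates and configures a socket; the helper name `make_low_latency_socket` is hypothetical, and whether disabling Nagle is appropriate depends on the traffic, as discussed above.

```python
import socket


def make_low_latency_socket() -> socket.socket:
    """Create a TCP socket with Nagle's algorithm disabled."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    # TCP_NODELAY = 1 tells the stack to send small segments immediately.
    sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
    return sock


sock = make_low_latency_socket()
enabled = sock.getsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY) != 0
print("TCP_NODELAY enabled:", enabled)
sock.close()
```

An interactive service might set this option on its connections, while a bulk-transfer service on the same server leaves the default in place and keeps the bandwidth savings.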

How ExtraHop Can Help

The ExtraHop wire data analytics platform recreates the TCP state machine for each endpoint communicating over the network, allowing it to surface all kinds of L4 metrics, including Nagle delays and tinygrams. This lets you make informed, intelligent decisions about traffic policies, device configuration, or even application architectures themselves. For example, if you've got a highly interactive service like VDI, you'll often want to prioritize this traffic and let tinygrams through without aggregating them, so the user experience stays smooth. Likewise, if you're using a load balancer with a default configuration and moving lots of small packets through the device, you'll often see stalls introduced by Nagle's algorithm.

The good news is that all of this is actionable. With real-time wire data analytics, it is easier to understand what's happening in your system, and to make informed choices to optimize your performance.

Want to explore some real-world examples of how wire data analytics can help you optimize VDI performance? Check out our free, interactive demo.

VP of Security

Matt Cauthorn is responsible for all security implementations and leads a team of technical security engineers who work directly with customers and prospects. A passionate technologist and evangelist, Matt is often on site with customers working to solve the complex and mission-critical business problems that Fortune 1000 and Global 2000 companies face. After years spent helping customers tap into the value offered by network-based analytics, Matt has been able to bring fresh thinking to security threat detection. Matt has collaborated with companies across various industries including banking, healthcare, energy, and retail. Prior to ExtraHop, Matt was a Sales Engineering Manager at F5, and before that he started his career in the trenches as a practitioner, where he oversaw application hosting, infrastructure, and security for five international data centers.

Matt holds an MBA from Georgia State University and a Bachelor of Science degree from the University of Florida. Matt's a security thought leader and blogger who frequently speaks at industry events and in webinars, and is commonly quoted in industry coverage.